
Today signifies a major achievement for the entire quantum ecosystem: Microsoft and Quantinuum demonstrated the most reliable logical qubits on record. By applying Microsoft’s breakthrough qubit-virtualization system, with error diagnostics and correction, to Quantinuum’s ion-trap hardware, we ran more than 14,000 individual experiments without a single error. Furthermore, we demonstrated more reliable quantum computation by performing error diagnostics and corrections on logical qubits without destroying them. This finally moves us out of the current noisy intermediate-scale quantum (NISQ) level to Level 2 Resilient quantum computing.

This is a crucial milestone on our path to building a hybrid supercomputing system that can transform research and innovation across many industries. It’s made possible by the collective advancement of quantum hardware, qubit virtualization and correction, and hybrid applications that take advantage of the best of AI, supercomputing, and quantum capabilities. With a hybrid supercomputer powered by 100 reliable logical qubits, organizations would start to see scientific advantage, while scaling closer to 1,000 reliable logical qubits would unlock commercial advantage.

Advanced capabilities based on these logical qubits will be available in private preview for Azure Quantum Elements customers in the coming months.

A purpose-built computing platform for science

Many of the hardest problems facing society, such as reversing climate change, addressing food insecurity and solving the energy crisis, are chemistry and materials science problems. However, the number of possible stable molecules and materials may surpass the number of atoms in the observable universe. Even a billion years of classical computing would be insufficient to explore and evaluate them all.

That’s why the promise of quantum is so appealing. Scaled quantum computers would offer the ability to simulate the interactions of molecules and atoms at the quantum level, beyond the reach of classical computers, unlocking solutions that can be a catalyst for positive change in our world. But quantum computing is just one layer for driving these breakthrough insights.

Whether it’s to supercharge pharma productivity or pioneer the next sustainable battery, accelerating scientific discovery requires a purpose-built, hybrid compute platform. Researchers need access to the right tool at the right stage of their discovery pipeline to efficiently solve every layer of their scientific problem and drive insights into where they matter most. This is what we built with Azure Quantum Elements, empowering organizations to transform research and development with capabilities including screening massive data sets with AI, narrowing down options with high-performance computing (HPC) or improving model accuracy with the power of scaled quantum computing in the future.

We continue to advance the state of the art across all these hybrid technologies for our customers, with today’s quantum milestone laying the foundation for useful, reliable and scalable simulations of quantum mechanics.

Moving toward resilience

In an article I wrote on LinkedIn, I used a ‘leaky boat’ analogy to explain why fidelity and error correction are so important to quantum computing. In short, fidelity is the value we use to measure how reliably a quantum computer can produce a meaningful result. Only with good fidelity will we have a solid foundation to reliably scale a quantum machine that can solve practical, real-world problems.

For years, one approach used to fix this leaky boat has been to increase the number of noisy physical qubits, together with techniques to compensate for that noise, while still falling short of real logical qubits with superior error correction rates. The main shortcoming of most of today’s NISQ machines is that the physical qubits are too noisy and error-prone to make robust quantum error correction possible. Our industry’s foundational components are not good enough for quantum error correction to work, and that is why even larger NISQ systems are not practical for real-world applications.

The task at hand for the entire quantum ecosystem is to increase the fidelity of qubits and enable fault-tolerant quantum computing so that we can use a quantum machine to unlock solutions to previously intractable problems. In short, we need to transition to reliable logical qubits, created by combining multiple physical qubits into logical ones to protect against noise and sustain a long (i.e., resilient) computation. We can only obtain this with careful hardware and software co-design. By having high-quality hardware components and breakthrough error-handling capabilities designed for that machine, we can get better results than any individual component could give us. Today, we’ve done just that.
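To make the idea of combining several physical qubits into one logical qubit more concrete, here is a minimal classical sketch: a bit is stored in `n` noisy copies and read out by majority vote. This is only a toy repetition-code model, not the qubit-virtualization system described in this post; real quantum codes must also handle phase errors and cannot simply copy a state. The function name `logical_error_rate` and the error rate `p = 0.002` are assumptions chosen for illustration.

```python
from math import comb


def logical_error_rate(p: float, n: int) -> float:
    """Probability that a majority vote over n noisy copies gives the wrong bit.

    Assumes each copy flips independently with probability p (a toy model).
    n must be odd so that the vote cannot tie.
    """
    if n % 2 == 0:
        raise ValueError("n must be odd so the majority vote cannot tie")
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a probability between 0 and 1")
    min_flips = n // 2 + 1  # smallest number of flips that corrupts the majority
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(min_flips, n + 1)
    )


if __name__ == "__main__":
    p = 0.002  # assumed per-copy error rate, for illustration only
    for n in (1, 3, 5, 7):
        print(f"n={n}: logical error rate ~ {logical_error_rate(p, n):.3e}")
```

In this toy model the logical error rate falls quickly as copies are added, but only because the per-copy error rate is already low. With `p` above 0.5, adding copies makes the result worse, which mirrors the point above that the physical components must be good enough before error correction can help.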

“Breakthroughs in quantum error correction and fault tolerance are important for realizing the long-term value of quantum computing for scientific discovery and energy security. Results like these enable continued development of quantum computing systems for research and development.”

Dr. Travis Humble, Director, Quantum Science Center, Oak Ridge National Laboratory

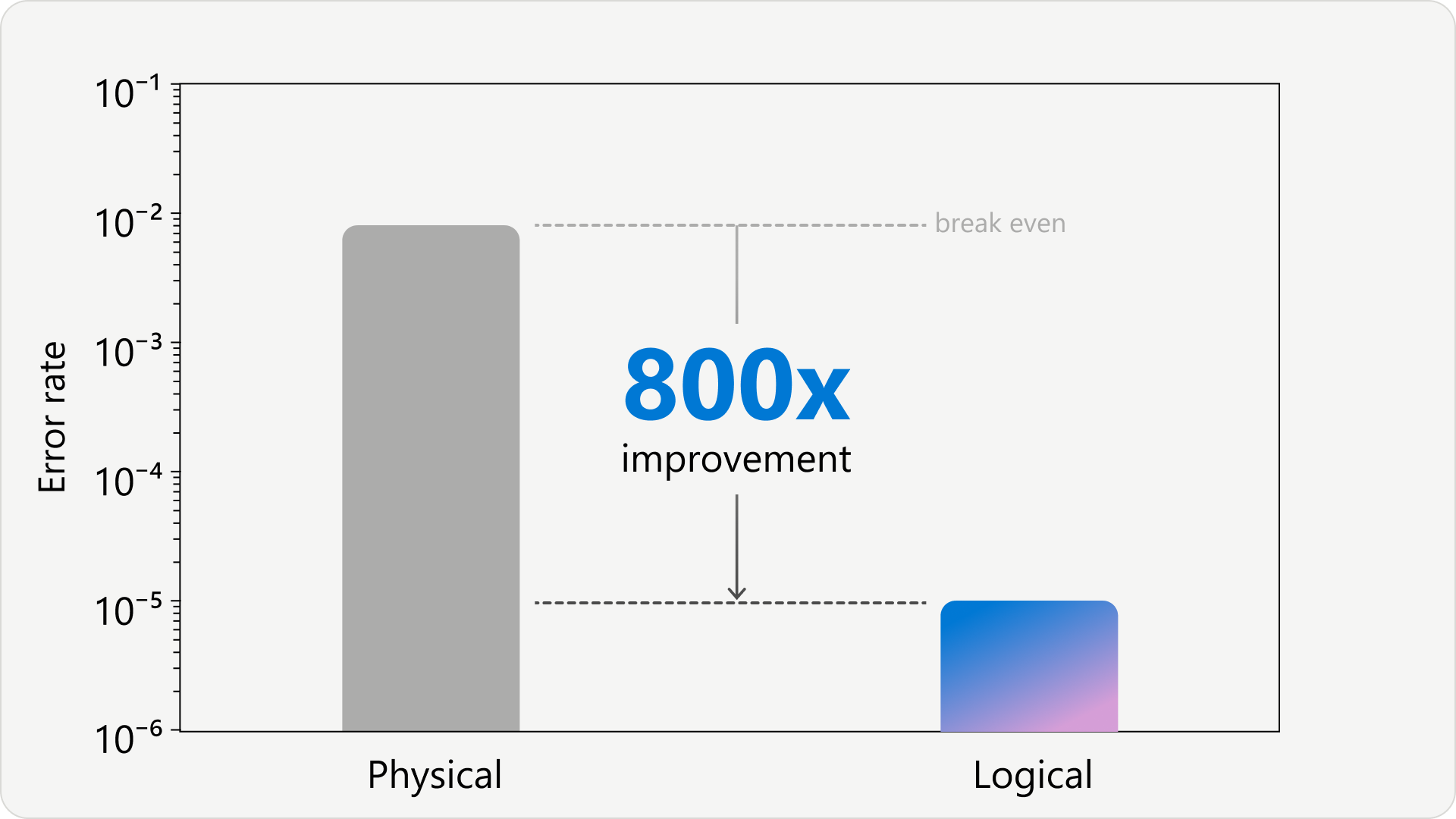

A breakthrough for handling quantum errors

That’s why today is such a historic moment: for the first time on record as an industry, we’re advancing from Level 1 Foundational to Level 2 Resilient quantum computing. We’re now entering the next phase for solving meaningful problems with reliable quantum computers. Our qubit-virtualization system, which filters and corrects errors, combined with Quantinuum’s hardware demonstrates the largest gap between physical and logical error rates reported to date. This is the first demonstrated system with four logical qubits that improves the logical error rate over the physical error rate by such a large margin.

As importantly, we’re also now able to diagnose and correct errors in the logical qubits without destroying them, a capability referred to as “active syndrome extraction.” This represents a huge step forward for the industry as it enables more reliable quantum computation.

With this system, we ran more than 14,000 individual experiments without a single error. You can read more about these results here.
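As a rough way to read a zero-error result, the sketch below computes the largest per-experiment error rate that is still statistically consistent with seeing no errors in a given number of runs. It assumes the runs are independent and identical, which is our simplification and not necessarily how the experiments were analyzed. The function name `zero_failure_upper_bound` and the 95% confidence level are assumptions, and 14,000 is used as a round lower figure for "more than 14,000".

```python
def zero_failure_upper_bound(n_trials: int, confidence: float = 0.95) -> float:
    """One-sided upper bound on the per-trial error rate after zero observed errors.

    Solves (1 - p)**n_trials = 1 - confidence for p, assuming independent,
    identically distributed trials.
    """
    if n_trials <= 0:
        raise ValueError("n_trials must be positive")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    alpha = 1.0 - confidence
    return 1.0 - alpha ** (1.0 / n_trials)


if __name__ == "__main__":
    n = 14_000  # round lower figure taken from the text above
    bound = zero_failure_upper_bound(n)
    print(f"95% upper bound on error rate: {bound:.2e}")
    print(f"Rule-of-three approximation:   {3 / n:.2e}")
```

Under these assumptions the bound is close to the familiar "rule of three" approximation, 3 divided by the number of trials, so more error-free runs tighten the bound roughly in proportion.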

“Quantum error correction often seems very theoretical. What’s striking here is the massive contribution Microsoft’s midstack software for qubit optimization is making to the improved step-down in error rates. Microsoft really is putting theory into practice.”

Dr. David Shaw, Chief Analyst, Global Quantum Intelligence

A long-standing collaboration with Quantinuum

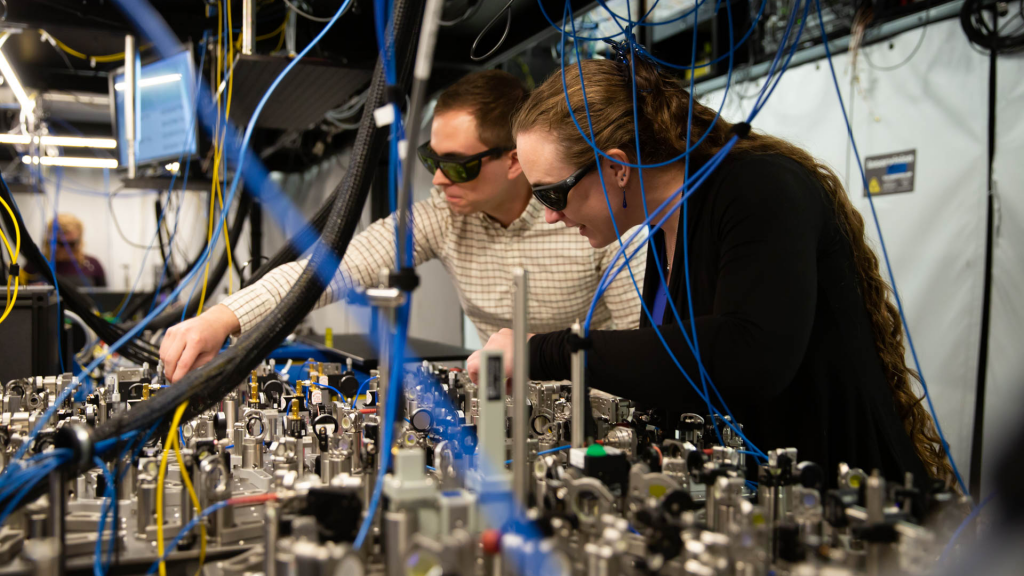

Since 2019, Microsoft has been collaborating with Quantinuum to enable quantum developers to write and run their own quantum code on ion-trap qubit technology, which offers high fidelity, full connectivity and mid-circuit measurements. Multiple published benchmark tests recognize Quantinuum as having the best quantum volumes, making them well-positioned to enter Level 2.

“Today’s results mark a historic achievement and are a wonderful reflection of how this collaboration continues to push the boundaries for the quantum ecosystem. With Microsoft’s state-of-the-art error correction aligned with the world’s most powerful quantum computer and a fully integrated approach, we’re so excited for the next evolution in quantum applications and can’t wait to see how our customers and partners will benefit from our solutions, especially as we move towards quantum processors at scale.”

Ilyas Khan, Founder and Chief Product Officer, Quantinuum

Quantinuum’s hardware performs at a physical two-qubit fidelity of 99.8%. This fidelity enables application of our qubit-virtualization system, with diagnostics and error correction, and makes today’s announcement possible. This quantum system, with co-innovation from Microsoft and Quantinuum, ushers us into Level 2 Resilient.

Pioneering quantum supercomputing, together

At Microsoft, our mission is to empower every individual and organization to achieve more. We’ve brought the world’s best NISQ hardware to the cloud with our Azure Quantum platform so our customers can embark on their quantum journey. This is why we’ve integrated artificial intelligence with quantum computing and cloud HPC in the private preview of Azure Quantum Elements. We used this platform to design and demonstrate an end-to-end workflow that integrates Copilot, Azure compute and a quantum algorithm running on Quantinuum processors to train an AI model for property prediction.

Today’s announcement continues this commitment by advancing quantum hardware to Level 2. Advanced capabilities based on these logical qubits will be available in private preview for Azure Quantum Elements in the coming months.

Lastly, we continue to invest heavily in progressing beyond Level 2, scaling to the level of quantum supercomputing. This is why we’ve been advocating for our topological approach, the feasibility of which our Azure Quantum team has demonstrated. At Level 3, we expect to be able to solve some of our most challenging problems, particularly in fields like chemistry and materials science, unlocking new applications that bring quantum at scale together with the best of classical supercomputing and AI, all connected in the Azure Quantum cloud.

We’re excited to empower the collective genius and make these breakthroughs accessible to our customers. For more details on how we achieved today’s results, explore our technical blog, and register for the upcoming Quantum Innovator Series with Quantinuum.
