REVERB is a documentary series from CBS Reports. Watch the latest episode, “A City Under Surveillance,” in the video player above.

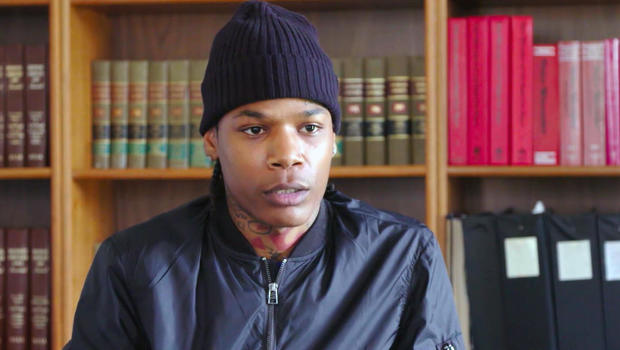

In July of 2019, Michael Oliver, 26, was on his way to work in Ferndale, Michigan, when a cop car pulled him over. The officer informed him that there was a felony warrant out for his arrest.

“I thought he was joking because he was laughing,” recalled Oliver. “But as soon as he took me out of my car and cuffed me, I knew this wasn’t a joke.” Shortly thereafter, Oliver was transferred to Detroit police custody and charged with larceny.

Months later, at a pre-trial hearing, he would finally see the evidence against him: a single screen-grab from a video of the incident, taken on the accuser’s cellphone. Oliver, who has an oval-shaped face and a number of tattoos, shared few physical characteristics with the person in the photo.

“He looked nothing like me,” Oliver said. “He didn’t even have tattoos.” The judge agreed, and the case was promptly dismissed.

CBS News

It was nearly a year later that Oliver learned his wrongful arrest was based on an erroneous match using controversial facial recognition technology. Police took a still image from a video of the incident and ran it through a software program manufactured by a company called DataWorks Plus. The software measures various points on a person’s face, such as the space between their eyes and the slope of their nose, to generate a unique “face print.” The Detroit Police Department then checks for a possible match in a database of photos; the system can access thousands of mugshots as well as the Michigan state database of driver’s license photos.

Oliver’s face came up as a match, but it wasn’t him, and there was plenty of evidence to prove it wasn’t.

Facial recognition technology has become commonplace in our society. It’s used to unlock our cellphones and to enhance airport security. But many view it as flawed technology that has the potential to cause serious harm. It misidentifies Black and Brown faces at rates significantly higher than those of their White counterparts, in some cases nearly 100% of the time. And that’s especially relevant in a city like Detroit, where nearly 80% of residents are Black.

“I think the notion that data is neutral has gotten us into a lot of trouble,” said Tawana Petty, a digital activist who works with the Detroit Equity Action Lab. “The algorithms are programmed by White men using data gathered from racist policies. It’s replicating racial bias that starts with humans and then is programmed into technology.”

Petty points out that more than a dozen other cities, including San Francisco and Boston, have banned such technology because of concerns about civil liberties and privacy. She has advocated for a similar ban in Detroit. Instead, the city voted in October to renew the contract with DataWorks.

Even supporters acknowledge that the system has a high error rate. Detroit Police Chief James Craig said in June, “If we were just to use the technology by itself, to identify someone,” which he noted would be against department policy, “I would say 96% of the time it would misidentify.”

Oliver’s case follows the wrongful arrest of Robert Williams, 42, who was also arrested in 2019 for a crime he didn’t commit, based on the same algorithmic software.

Petty believes that with this technology the human costs far outweigh the benefits. “Yes, innovation is inevitable,” she said. “But this wouldn’t be the first time we’ve pulled back on something that we realize wasn’t good for the greater of humanity.”

Oliver still hasn’t recovered from the fallout of his wrongful arrest. “I missed work for trial dates. Then I lost my job.” Without an income, he couldn’t make rent on his home or car payments, and soon he lost those too. A year later he is effectively homeless, couch-surfing with friends and family, and desperately searching for a new job.

In July, Oliver filed a lawsuit against the city of Detroit for at least $12 million. The lawsuit accuses Detroit Police of using “failed facial recognition technology knowing the science of facial recognition has a substantial error rate among black and brown persons of ethnicity which would lead to the wrongful arrest and incarceration of persons in that ethnic demographic.”

The police department has acknowledged that the lead investigator on the case didn’t perform the due diligence they should have before making the arrest.

“We sent the image to the detective. But then from there, the detective has to go out and actually look at the image and compare it to any other information,” said Andrew Rutebuka, head of the Crime Intelligence Unit that uses the technology. Investigators are trained to follow the facts, as they would in any other case, such as confirming the whereabouts of the person at the time the crime occurred, or matching any other data. “The software just provides the lead,” said Rutebuka. “The detective has to do their part of the work and actually follow up.”

Since Oliver’s arrest, the Detroit Police Department has revised its policy on using facial recognition software. Now it can only be used in the event of a violent crime. Detroit has the fourth highest murder rate in the nation, and the department maintains that the system helps to solve cases that would otherwise go cold.

Some local families support the technology. Marsheda Holloway credits the system with helping solve the murder of her 29-year-old cousin Denzel by retracing where he went and who he was with before he was killed.

“I never want another family to go through what me and my family went through,” she said. “If facial recognition can help, we all need it. Everybody needs something.”

But Michael Oliver isn’t sure the software is worth it. “I used to be able to take care of my family,” he said. “I want my old life back.”

Advocates believe there are dozens of similar cases of wrongful arrests, but they are difficult to identify because the police department isn’t required to share when matches have been made using facial recognition software.

“Hopefully I win my case,” Oliver said. “I don’t want anyone else to go through this.”
